MITRE ATT&CK

What is MITRE ATT&CK™?

MITRE ATT&CK is a knowledge base that maps how attackers operate, using categorized tactics and techniques based on observed behavior.

It provides a structured way for security teams to understand how attacks unfold across the full lifecycle, from initial access to data exfiltration.

Unlike traditional security models that focus on tools or signatures, ATT&CK focuses on behavior, making it more effective for detection and response.

MITRE introduced ATT&CK (Adversarial Tactics, Techniques & Common Knowledge) in 2013 as a way to describe and categorize adversarial behaviors based on real-world observations. ATT&CK is a structured list of known attacker behaviors that have been compiled into tactics and techniques and expressed in a handful of matrices as well as via STIX/TAXII.

Understanding MITRE ATT&CK Framework

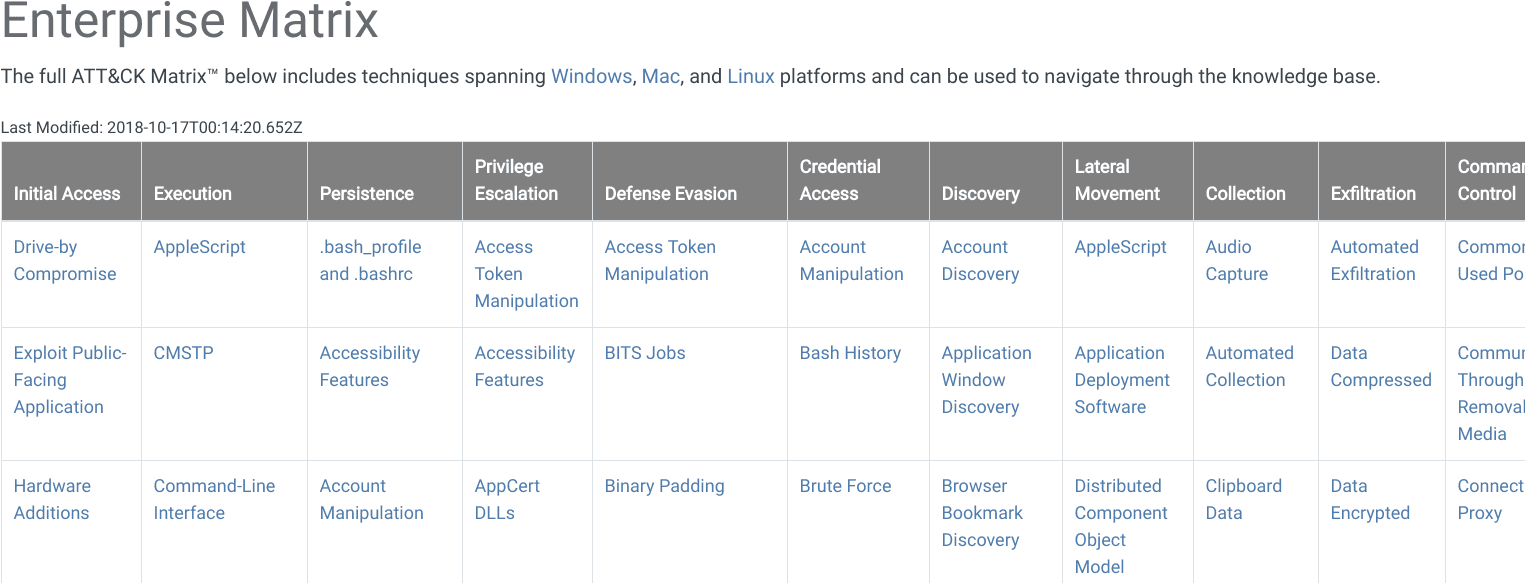

The main matrix used by most security teams is the Enterprise matrix.

Additional matrices include Mobile and PRE-ATT&CK.

Each of these matrices contains various tactics and techniques associated with that matrix’s subject matter.

The Enterprise matrix is made up of tactics and techniques that apply to Windows, Linux, and/or macOS systems. The Mobile matrix contains tactics and techniques that apply to mobile devices.

PRE-ATT&CK contains tactics and techniques related to what attackers do before they try to exploit a particular target network or system.

The Nuts and Bolts of ATT&CK: Tactics and Techniques Explained

The MITRE ATT&CK framework is built around two core components: tactics and techniques.

- Tactics represent the attacker’s objective, such as gaining access or moving laterally

- Techniques describe how that objective is achieved

For example, “Lateral Movement” is a tactic. Techniques within that category describe the different ways an attacker can move across a network.

This structure helps teams understand both the goal and the method behind an attack.

When looking at ATT&CK in the form of a matrix, the column titles across the top are tactics and are essentially categories of techniques.

Tactics are what attackers are trying to achieve, whereas the individual techniques are how they accomplish those steps or goals.

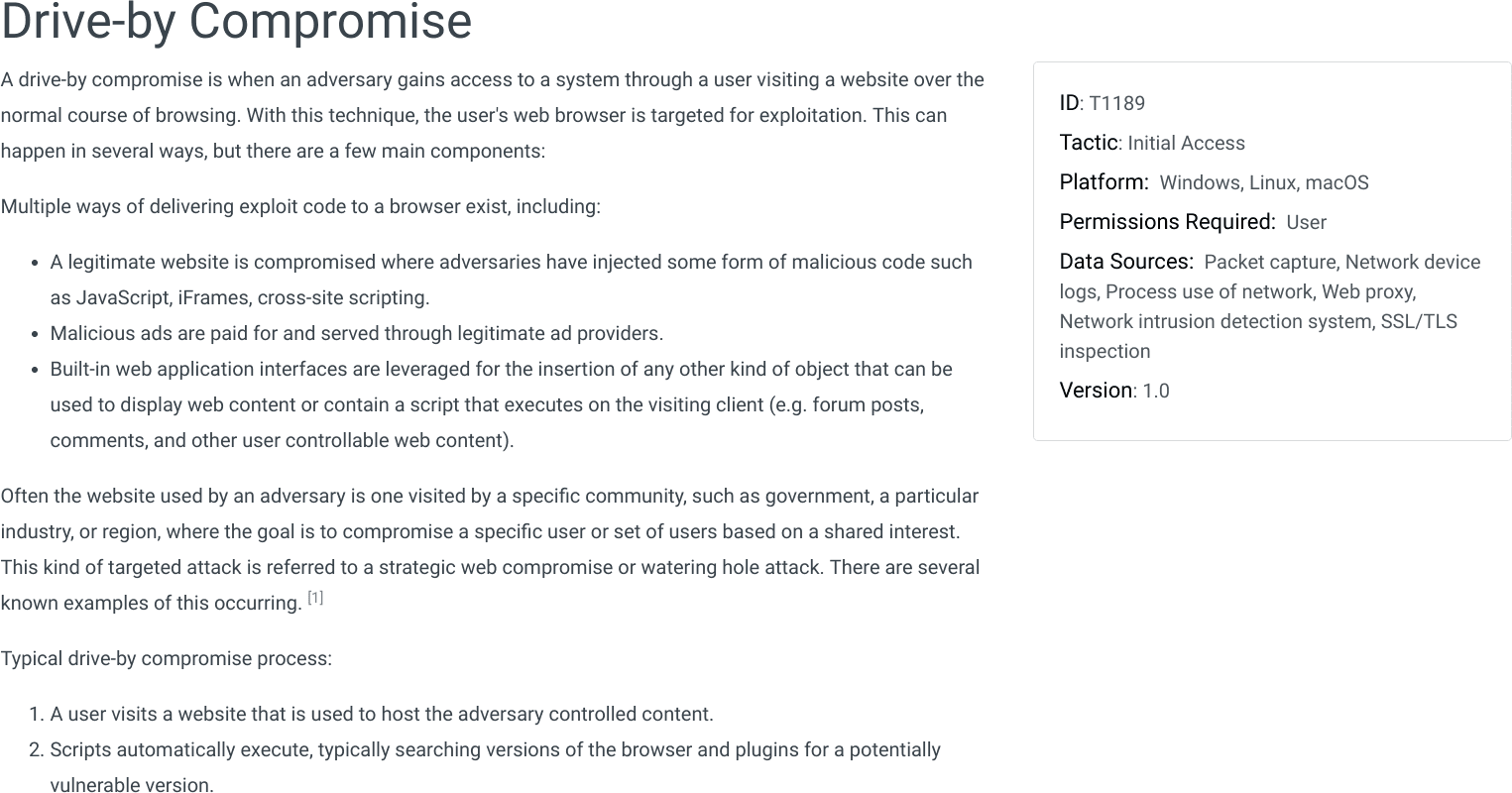

For example, one of the tactics is Lateral Movement. In order for an attacker to successfully achieve lateral movement in a network, they will want to employ one or more of the techniques listed in the Lateral Movement column in the ATT&CK matrix.

A technique is a specific behavior to achieve a goal and is often a single step in a string of activities employed to complete the attacker’s overall mission. ATT&CK provides many details about each technique, including a description, examples, references, and suggestions for mitigation and detection.
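To make the column-and-cell structure concrete, here is a minimal Python sketch of a matrix as a mapping from tactics to the techniques listed under them. The `Technique` class, its field names, the `matrix` dictionary, and the `techniques_for` helper are all hypothetical names chosen for illustration; they are not part of any official ATT&CK tooling, and only two technique names from this article are included.

```python
from dataclasses import dataclass, field


@dataclass
class Technique:
    """One cell in the matrix: a specific behavior used to reach a tactic's goal.

    The fields loosely mirror the kinds of detail ATT&CK records per technique;
    the field names themselves are assumptions made for this sketch.
    """
    name: str
    description: str = ""
    mitigations: list[str] = field(default_factory=list)
    detections: list[str] = field(default_factory=list)


# Column titles (tactics) map to the techniques listed beneath them.
# This is a tiny illustrative subset, not the real matrix.
matrix: dict[str, list[Technique]] = {
    "Initial Access": [Technique("Spearphishing Link")],
    "Lateral Movement": [Technique("Pass the Hash")],
}


def techniques_for(tactic: str) -> list[str]:
    """Return the names of the techniques listed under a tactic column."""
    return [t.name for t in matrix.get(tactic, [])]


print(techniques_for("Lateral Movement"))
```

Looking up a tactic that is not in this subset returns an empty list rather than raising an error, which keeps the helper safe to call with any column title.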

ATT&CK helps map how attackers move through each stage of an attack using specific techniques.

For example, an attacker might:

- Gain access through phishing

- Escalate privileges on a system

- Steal credentials

- Establish persistence

- Move laterally across the network

Each step maps to a tactic, with specific techniques used to carry out the action.

As an example of how tactics and techniques work in ATT&CK, an attacker may wish to gain access into a network and install cryptocurrency mining software on as many systems as possible inside that network. In order to accomplish this overall goal, the attacker needs to successfully perform several intermediate steps.

First, they gain access to the network, possibly through a Spearphishing Link. Next, they may need to escalate privileges through Process Injection. They can then obtain other credentials from the system through Credential Dumping and establish persistence by setting the mining script to run as a Scheduled Task. With this accomplished, the attacker may be able to move laterally across the network with Pass the Hash and spread their coin miner software to as many systems as possible.

In this example, the attacker had to successfully execute five steps – each representing a specific tactic or stage of their overall attack: Initial Access, Privilege Escalation, Credential Access, Persistence, and Lateral Movement. They used specific techniques within these tactics to accomplish each stage of their attack (spearphishing link, process injection, credential dumping, etc.).
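The coin miner scenario above can be written down as an ordered list of tactic and technique pairs. The sketch below is one possible representation, not an official format; `Step`, `coin_miner_chain`, and `tactics_used` are hypothetical names introduced here.

```python
from typing import NamedTuple


class Step(NamedTuple):
    """One stage of an attack: the goal (tactic) and the method (technique)."""
    tactic: str
    technique: str


# The five steps from the coin miner example, in the order described.
coin_miner_chain = [
    Step("Initial Access", "Spearphishing Link"),
    Step("Privilege Escalation", "Process Injection"),
    Step("Credential Access", "Credential Dumping"),
    Step("Persistence", "Scheduled Task"),
    Step("Lateral Movement", "Pass the Hash"),
]


def tactics_used(chain: list[Step]) -> list[str]:
    """Return the tactics in the order the attacker reached them."""
    return [step.tactic for step in chain]


for number, step in enumerate(coin_miner_chain, start=1):
    print(f"{number}. {step.tactic}: {step.technique}")
```

Keeping the tactic and the technique as separate fields means the same chain can be summarized by goal (the tactics) or examined by method (the techniques).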

The Differences Between PRE-ATT&CK and ATT&CK Enterprise

Together, PRE-ATT&CK and ATT&CK Enterprise form the full list of tactics, which roughly aligns with the Cyber Kill Chain. PRE-ATT&CK mostly aligns with the first three phases of the kill chain: reconnaissance, weaponization, and delivery. ATT&CK Enterprise aligns well with the final four phases: exploitation, installation, command & control, and actions on objectives.

MITRE ATT&CK and Threat Intelligence

Threat intelligence becomes more actionable when mapped to MITRE ATT&CK techniques.

Instead of listing indicators alone, intelligence tied to ATT&CK helps teams understand what an attacker is trying to do and what to look for next.
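As a rough illustration of the difference, the Python sketch below takes a hypothetical intelligence report that names techniques alongside its indicators, maps those techniques to tactics, and lists the tactics from this article's coin miner example that have not been reported yet. The report contents, the `TECHNIQUE_TO_TACTIC` lookup, and the `summarize` function are assumptions made for this example. The one-to-one lookup is also a simplification, since a technique in ATT&CK can appear under more than one tactic.

```python
# Simplified lookup built from the techniques named in this article.
TECHNIQUE_TO_TACTIC = {
    "Spearphishing Link": "Initial Access",
    "Process Injection": "Privilege Escalation",
    "Credential Dumping": "Credential Access",
    "Scheduled Task": "Persistence",
    "Pass the Hash": "Lateral Movement",
}

# Hypothetical report: the indicator values are documentation placeholders.
report = {
    "indicators": ["203.0.113.7", "malicious.example"],
    "techniques": ["Spearphishing Link", "Credential Dumping"],
}


def summarize(report: dict) -> tuple[list[str], list[str]]:
    """Return (tactics observed, tactics from the lookup not yet observed)."""
    observed = [
        TECHNIQUE_TO_TACTIC[name]
        for name in report["techniques"]
        if name in TECHNIQUE_TO_TACTIC
    ]
    # dict.fromkeys keeps first-seen order while dropping duplicates.
    all_tactics = list(dict.fromkeys(TECHNIQUE_TO_TACTIC.values()))
    not_yet_seen = [tactic for tactic in all_tactics if tactic not in observed]
    return observed, not_yet_seen


observed, not_yet_seen = summarize(report)
print("Observed tactics:", observed)
print("Worth watching for:", not_yet_seen)
```

The indicators alone say which address or domain to block; the tactic view suggests which behaviors a team might hunt for next.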


Frequently Asked Questions

What is MITRE ATT&CK used for?

MITRE ATT&CK is used to understand and map attacker behavior. Security teams use it to improve detection, guide investigations, and measure how well their defenses cover real-world attack techniques.

What are tactics and techniques in MITRE ATT&CK?

Tactics represent the attacker’s objective, while techniques describe how that objective is achieved. Together, they map each stage of an attack.

Is MITRE ATT&CK a tool or a framework?

MITRE ATT&CK is a framework, not a tool. It is used alongside security platforms like SIEM, SOAR, and threat intelligence systems.